Nvidia keeps giving Wall Street everything it wants — without getting rewarded

Yet another case of good financial news from Nvidia failing to generate an enduring positive reaction.

At Nvidia’s GPU Technology Conference, CEO Jensen Huang stressed that the chip designer could be everything to everyone in AI.

And that, along with a mammoth revenue outlook, was everything that Wall Street wanted to hear.

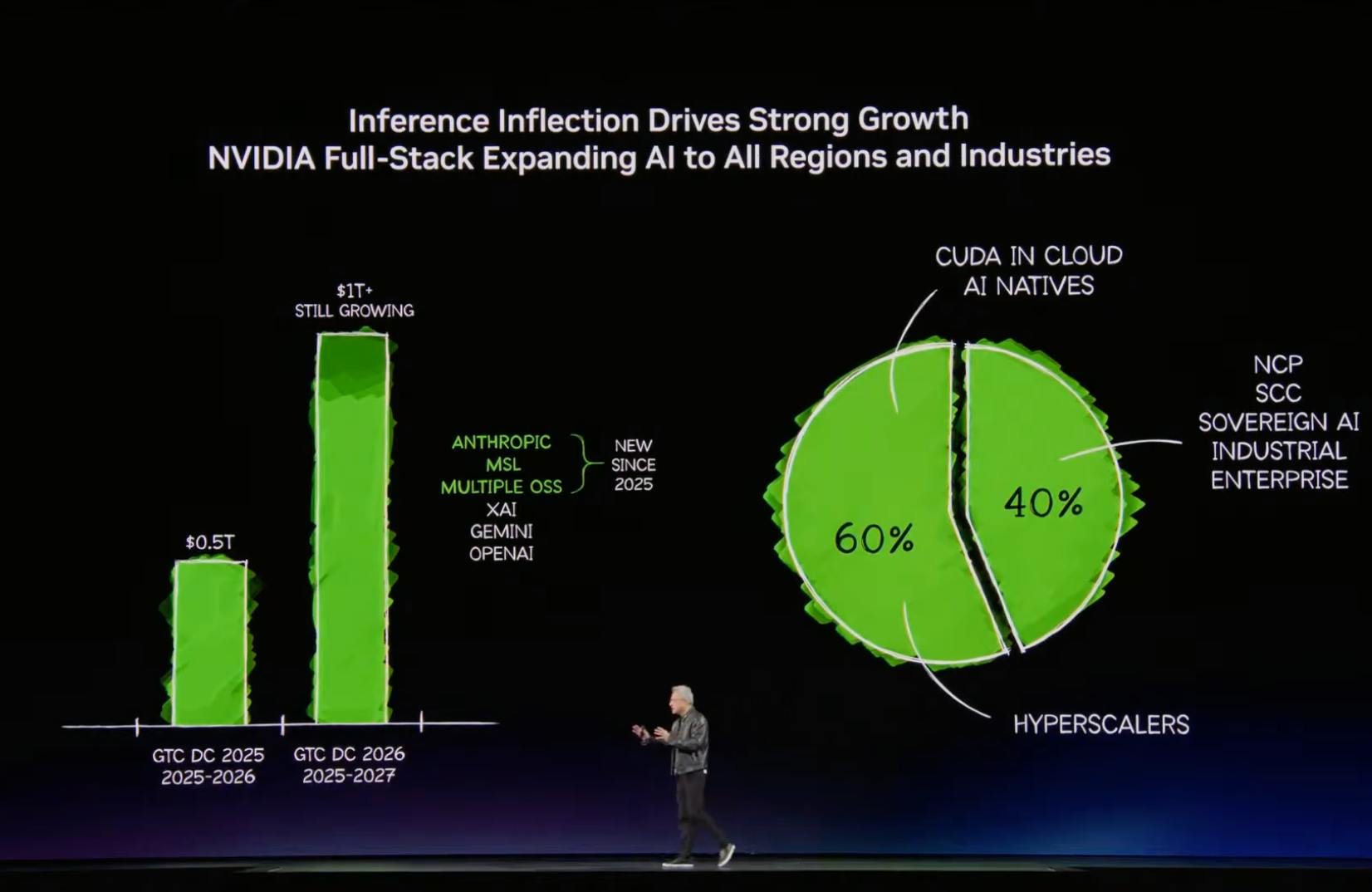

During his keynote, Huang repeatedly said that the chip designer is both vertically integrated (that is, it offers all the solutions you need, not just GPUs) and horizontally open (read: it will integrate its offerings into whatever your technology stack happens to be). The headline, however, was his proclamation that AI chip sales would total at least $1 trillion through 2027.

Per analysts, Nvidia clarified that this $1 trillion guidance applies only to sales of Blackwell (which started shipping in its fiscal Q4 2025, roughly calendar Q4 2024) and Rubin chips, as well as associated networking equipment and CPUs, but not other products that were discussed at GTC. Therefore, the Street anticipates that overall data center sales are likely to come in well above that milestone figure.

Bottom line: no matter how you slice it, this number, and its implications for total revenues through calendar year 2027, is an unambiguous positive relative to the consensus estimate.

But it’s yet another case of good financial news from Nvidia failing to generate an enduring positive reaction. Shares briefly spiked to session highs after Huang’s revenue guidance, but quickly gave back the entire advance and closed below where they were trading when the presentation started.

(That’s still better than the stock has done during most recent high-profile events.)

Here’s what analysts had to say about Huang’s keynote:

JPMorgan’s Harlan Sur, “overweight” rating, price target of $265:

“Net, while the market debate has shifted to AI spending cycle duration, we believe NVDA’s vertically integrated platform (now spanning seven chips, five rack systems, and the software stack to tie them together) is difficult to replicate, and the combination of accelerating inference demand, a structurally expanding TAM [total addressable market] via traditional workload acceleration, and a broadening customer base supports a more durable cycle than the market is currently underwriting.”

“NVDA’s Groq 3 LPU integration with Vera Rubin was the most architecturally significant product announcement — a disaggregated inference architecture that pairs Rubin GPUs (high throughput) with Groq LPUs (low latency decode) and positions NVDA to effectively service the low-latency inference market (where ASICs have traditionally held an advantage).”

Bernstein’s Stacy Rasgon, “outperform” rating, price target of $300:

“NVIDIA’s full platform approach appears increasingly difficult to disrupt as they relentlessly build out their software and hardware stacks across multiple offerings including GPUs, CPUs, DPUs, (now) LPUs, networking, and storage, and continue to drive token costs down by orders of magnitude with every generation which should allow them to capitalize as inference computing exponentiates; frankly we increasingly wonder how anyone else can compete with this.”

“NVIDIA’s roadmap looks really solid, their capability gap continues to widen, new offerings ought to help cement their position in inference just as they dominate training, and the order book suggests further upside to numbers, with the stock (in our opinion) almost absurdly valued given their positioning.”

Bank of America’s Vivek Arya, “buy” rating, price target of $300:

“1 gigawatt of data center now represents ~$40 billion of capex, with NVDA addressing $20-30 billion depending on networking content. Overall, we see continued NVDA leadership in AI backed by its broadening full-stack end-to-end pipeline, extreme co-design with customers, and supply assurance.”

Wedbush Securities’ Dan Ives, “outperform” rating, price target of $300:

“GTC 2026 was another opportunity for Jensen & Co. to further separate from the field in the AI arms race and they delivered, further reinforcing that Nvidia sits alone at the top of the AI mountain with the entire tech world watching below.”

“Inference has emerged as a dominant demand driver, with the GB200 NVL72 delivering up to 50x performance per watt and 35x lower cost per token compared to Hopper, making it the clear architecture of choice for enterprises scaling agentic AI workloads. With agentic systems now generating tokens at an exponential rate and the Groq licensing agreement set to unlock new levels of low-latency inference performance, the GB200 NVL72 sits at the center of the most important infrastructure buildout in the history of technology and NVDA’s inference leadership is only widening.”

Morgan Stanley’s Joseph Moore, “overweight” rating, price target of $260:

“We note that NVIDIA has consistently put up more upside to quarterly guidance than either of these competitors, and while just how strong 2027 is remains to be seen, we believe that NVIDIA’s forecast leaves the most room for upside in the base case. Market share in this environment has more to do with supply chain, and we increasingly see potential for bottlenecks in other parts of the supply chain may actually bring preference to NVIDIA given a non supply limitation on GPU/XPU.

“That’s especially relevant when the stock is trading at ~17x EPS [earnings-per-share] numbers that are likely to have material upside, discounting a falloff in earnings not yet in evidence. It’s certainly true that a semiconductor company’s views about demand 24 months into the future should be taken with a healthy dose of skepticism, but given bottlenecks in AI around land, power, and space, Nvidia’s customers are making planning decisions and commitments that far into the future, so it makes sense Nvidia is getting that visibility as well.”